Science News

Whether it’s the secrets buried deep beneath the Earth’s surface or the ones lurking in the far reaches of outer space, new scientific discoveries come to light every single day. And for the latest and most fascinating science news, All That’s Interesting is the place to look.

From the ancient past come science stories like that of the lost continent of Lemuria and the astounding dinosaur unearthed in a mummy-like state with skin and guts still intact. And from our present day come stories like that of the Bajau people, the sea nomads of Southeast Asia, and the body farms that are revolutionizing our understanding of death.

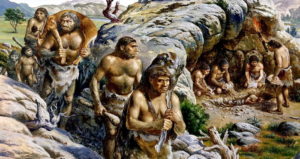

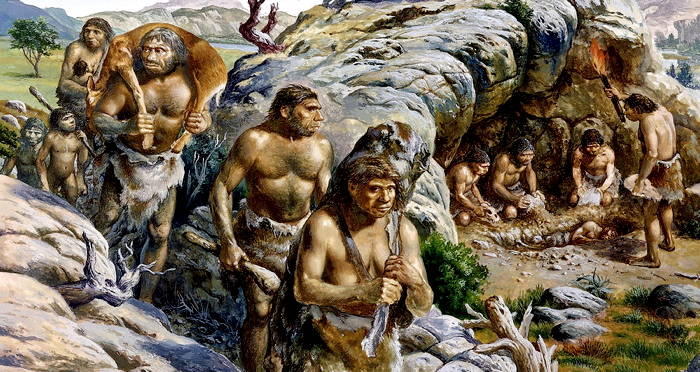

Meanwhile, the animal kingdom offers up interesting science news like reports of killer whales eating and mutilating great white sharks as well as stories of the creatures surprisingly thriving in Chernobyl’s radioactive exclusion zone. At the same time, human-centric stories are upending everything that scientists thought they knew about our own shared past.

Be it human or animal, past or present, underground or in outer space, the latest science news stories and scientific discoveries are continually reshaping our world and reminding us of both how much we’ve discovered and how much we have yet to explore.

For more of the interesting science news that you need to know, explore All That’s Interesting’s collection of the latest stories below.