The TikTok deepfakes are realistic and disturbing, and run the risk of re-victimizing murder victims' families.

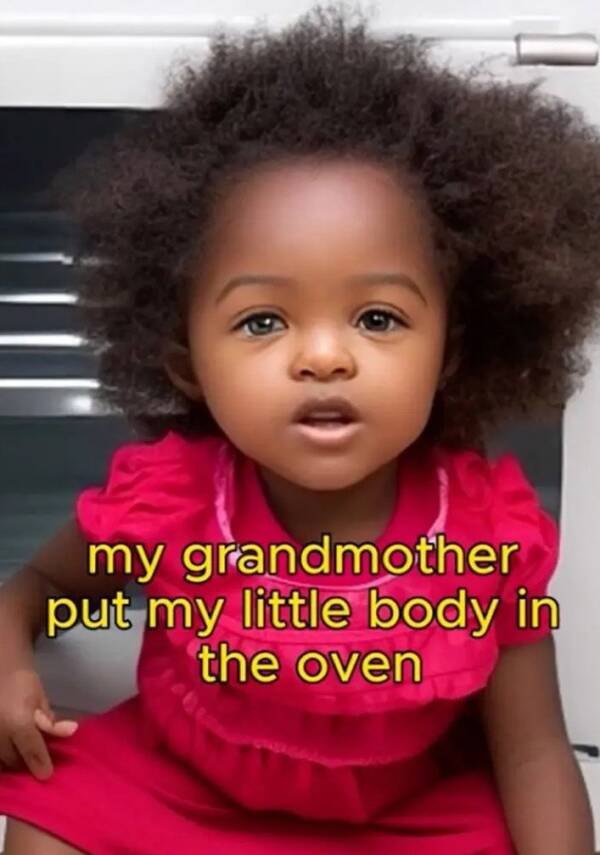

One of the A.I.-generated deepfakes of a child murder victim. (TikTok)

True crime is an increasingly popular subject today. But some accounts on TikTok have taken society’s interest in true crime to a disturbing level by creating realistic A.I.-generated videos of murder victims, often young children, and having them discuss how they were killed.

“They’re quite strange and creepy,” Paul Bleakley, an assistant professor in criminal justice at the University of New Haven, told Rolling Stone. “They seem designed to trigger strong emotional reactions, because it’s the surest-fire way to get clicks and likes. It’s uncomfortable to watch, but I think that might be the point.”

Accounts like @truestorynow and Nostalgia Narratives have tens of thousands of followers and post realistic videos of murder victims calmly describing what happened when they were murdered. Some show famous adult victims, like George Floyd, but many depict young children.

“Grandma locked me in an oven at 230 degrees when I was just 21 months old,” one of the A.I.-generated deepfakes posted on @truestorynow said in a brief clip. Identified as Rody Marie Floyd in the clip, the generated girl in the video tells the real story of Royalty Marie Floyd, a 20-month-old who was stabbed and then burned in an oven by her grandmother in 2018.

“Royalty Marie Floyd was the best thing that ever happened to me,” her mother wrote on Facebook after her death. “She’s my one and only daughter. My first love. The hardest thing that I ever had to go through in my life. My heart has been ripped from my chest.”

Clips like the one of “Rody” have millions of views on TikTok.

An expert described the clips as “strange and creepy” and “uncomfortable to watch.” (TikTok)

Rolling Stone reported that accounts like Nostalgia Narratives claim that they do not use actual photos of the victims to generate the deepfakes out of “respect [for] the family.” However, using the images is also forbidden on TikTok. The platform’s community guidelines ban deepfake depictions of private individuals or young people.

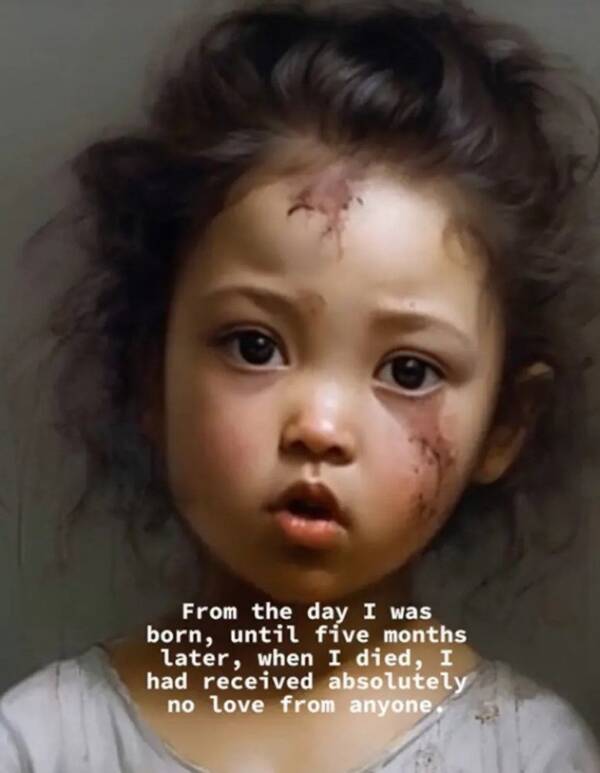

Regardless of whether the photos are real or not, the story is. And experts like Bleakley believe that content like this, used without the family’s permission, ultimately hurts murder victims’ loved ones.

“Something like this has real potential to revictimize people who have been victimized before,” Bleakley told Rolling Stone. “Imagine being the parent or relative of one of these kids in these AI videos. You go online, and in this strange, high-pitched voice, here’s an AI image [based on] your deceased child, going into very gory detail about what happened to them.”

The clips have the potential to revictimize murder victims’ loved ones. (TikTok)

For the moment, videos like these are completely legal. There is no federal law that explicitly bans nonconsensual deepfake images and videos. And though families of murder victims might find the deepfakes upsetting, there’s no clear legal recourse for them to take.

What’s more, the A.I. clips of murder victims that appear on TikTok now could get even worse. As Rolling Stone notes, nothing is stopping content creators from recreating crimes using artificial intelligence, down to each grisly detail.

“This is always the question with any new technological development,” Bleakley said. “Where is it going to stop?”

TikTok has acted to stem the tide of murder victim deepfakes. Rolling Stone reports that @truestorynow, which had nearly 50,000 followers, has been taken down. Across the platform, however, other deepfakes are still easy to find.
